AI in software development: performance amplifier or chaos accelerator?

Every time a new technology arrives on the software engineering scene, we're promised the same thing: faster, better and cheaper. We've seen it with the cloud, open source, and CI/CD. But this time, with generative artificial intelligence, we're not just talking about a new tool: we're talking about a genuine paradigm shift.

Just five years ago, AI for software development was limited to basic code autocompletion. The debate is no longer whether AI will transform the daily lives of your development teams, but how quickly it will redefine their performance.

For engineering managers and team leaders, the challenge now goes beyond simply distributing GitHub Copilot licenses. It's about framing a strategic adoption of AI in development processes, one where speed doesn't come at the expense of product quality.

In this article, we explore:

- Why AI multiplies your strengths (and weaknesses)

- How to know if your teams are really using AI in their work

- How to set aside vanity metrics and measure AI's true impact on your delivery

- The pillars for successful adoption and maximizing the impact of AI in your development teams

- How Nexapp and Axify can help you navigate this organizational transformation from technical trial to business success

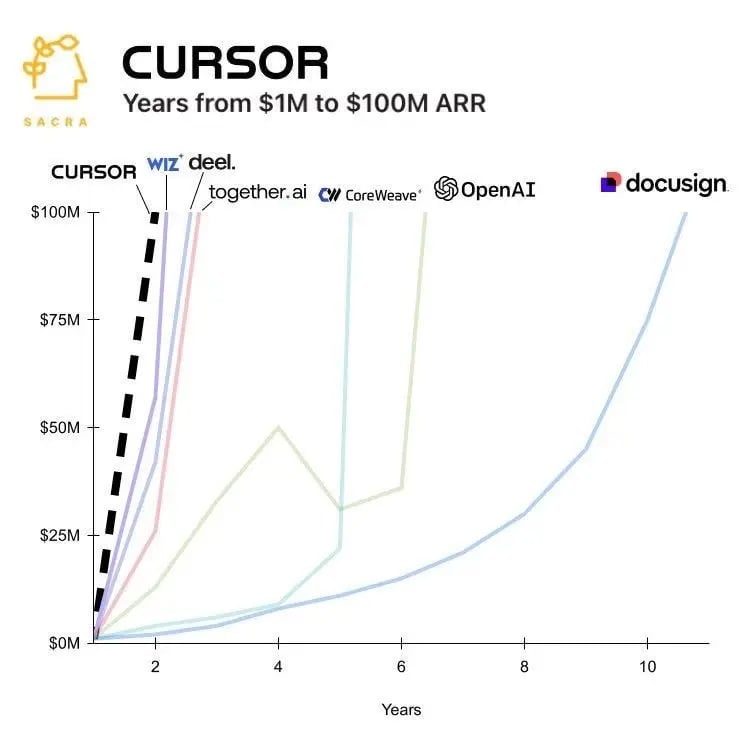

Overview of AI adoption in software development

Today, adopting artificial intelligence is no longer optional for software engineering teams; it's a matter of competitive survival. For proof, look at how quickly the tools are catching on. The Cursor code editor, for example, grew from $1 million in annual recurring revenue (ARR) to $100 million in less than two years. This meteoric rise shows that developers aren't just "testing" AI: they're integrating it at scale into their daily workflows.

But beyond the hype, what does the actual data say? This is where the latest DORA report (State of AI-Assisted Software Development, 2025) becomes essential.

Why rely on DORA data?

For those unfamiliar, DORA (DevOps Research and Assessment) has been the global benchmark for measuring the performance of technology teams for over 10 years. Their rigorous methodology, based on thousands of organizations, measures the actual delivery performance of development teams and highlights the practices that enable the best teams to become even better, year after year.

What the DORA report says about AI in software development

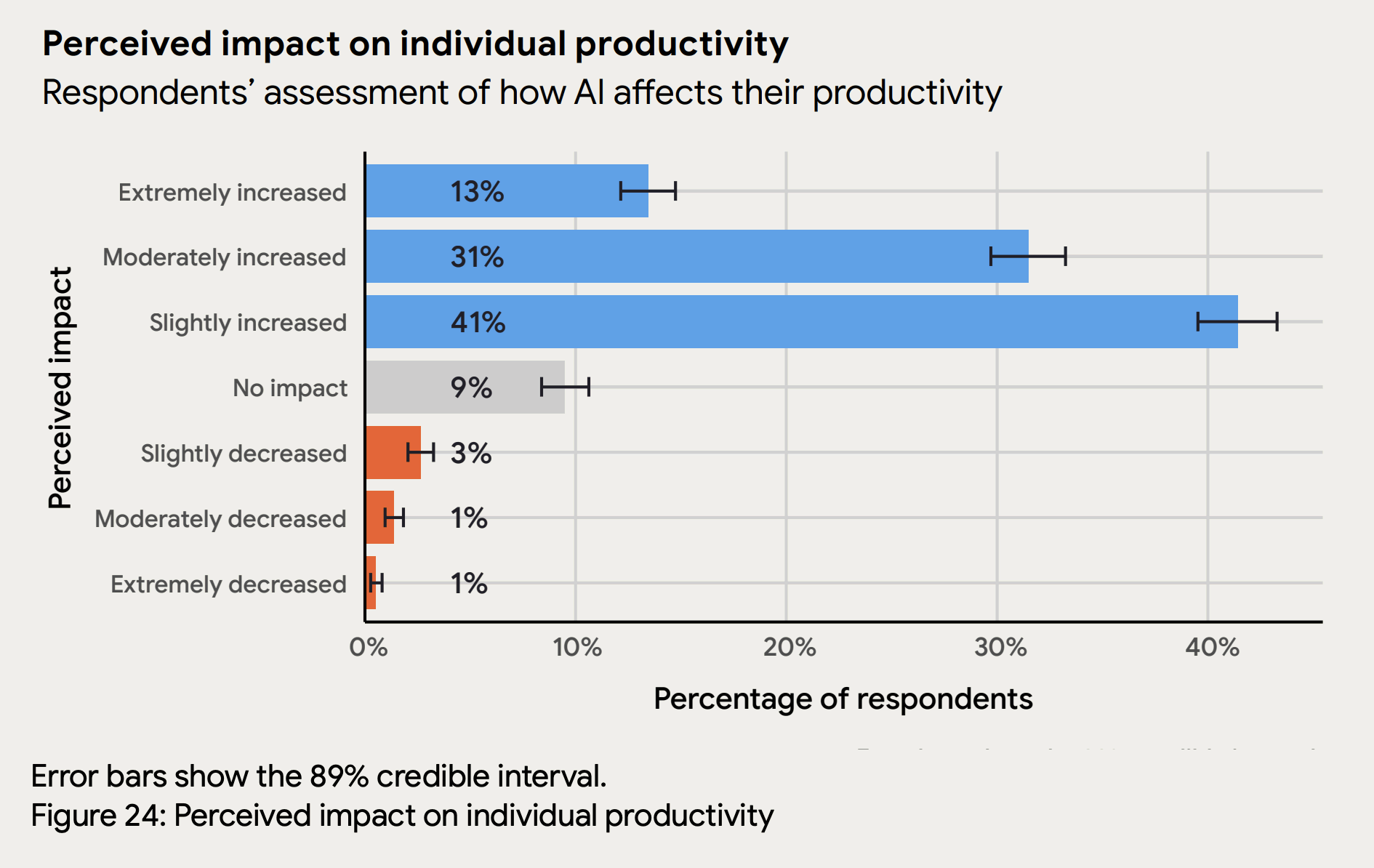

The latest data are unequivocal:

- 90% of developers now use AI in their daily tasks.

- 59% of them are already seeing concrete improvements in the quality of their code.

Yet one question remains for many managers: does AI really make your organization more efficient, or does it simply create an illusion of productivity? To move from a passing trend to a sustainable performance strategy, you need to know where to look.

The goal of AI adoption in your development teams should always be twofold: to speed up delivery while improving quality. You can't have one without the other. If you gain speed but your bug count explodes, you're not performing better: you're simply creating debt faster. This brings us to the amplifying effect of AI on development teams.

AI: a powerful amplifier of your development teams' strengths (and weaknesses)

The central idea of the DORA report on AI-assisted software development is that artificial intelligence doesn't create competence out of thin air, nor does it fundamentally transform the way you deliver software. It doesn't fix your processes, repair your technical debt or clarify your priorities. Rather, it acts as an amplifier. It gives wings to teams that already have rigour, but it can also mask (or, worse still, amplify) the problems of technical debt and fragile processes in others.

- For a mature team: It eliminates repetitive tasks (boilerplate code, simple unit tests), freeing up time for architecture and complex problem-solving. The result? Exploding velocity without sacrificing stability.

- For a team in technical debt: It can generate code at such speed that code review becomes a bottleneck, increasing debt and security risks. The result? AI will generate average code at record speed.

In fact, according to the DORA report, teams with mature processes see their throughput increase (PR volume up 98%), while disorganized teams simply see their code review time explode (+91%) and their bug rate rise (+9%).

Basically, if you give an F1 engine to a driver who doesn't know his blind spots, he'll just hit the wall faster. AI therefore requires us to redouble our efforts on the solid foundations of software engineering. And, above all, to measure its impact on the software development cycle in a methodical way.

How can you measure the impact of AI on your software development cycle?

Deploying code assistants represents a major investment, both in terms of licenses and training time. To ensure that this investment pays off, it's important not to confuse usage with performance. The key lies in a two-step analysis:

- Measure adoption: Are your teams really using AI-assisted programming tools, and how?

- Correlate adoption with impact: What are the concrete effects of this usage on your speed and quality?

Without this distinction, it's impossible to know whether AI is really helping you or just adding noise. Let's start by looking at how to validate that AI has indeed taken root in your development teams' day-to-day work.

Step #1: Measure AI adoption in your development teams

You're probably familiar with the cost of Cursor or GitHub Copilot licenses, but do you know whether your development teams are actually using AI in their day-to-day work? The number of licenses distributed says nothing about how they're actually used. Having access to AI doesn't necessarily mean adopting it.

According to DORA's AI-assisted programming report, adoption rests on three essential dimensions that, together, reveal your teams' AI maturity:

- Reliance: What percentage of tasks (code, testing, documentation) is actually assisted by AI?

- Trust: What is the acceptance rate of generated suggestions? If your developers reject 90% of suggestions, the tool becomes a source of distraction and friction rather than an accelerator.

- Reflexive use: Is AI naturally integrated into the workflow, or is it used only occasionally for isolated tasks?
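
To make these three dimensions concrete, here is a minimal Python sketch of how they might be computed from per-developer activity data. The `DeveloperActivity` record, its field names and the formulas (AI-assisted tasks over total tasks, accepted suggestions over shown suggestions, AI-active days over working days) are our own illustrative assumptions, not DORA's definitions or the schema of any tool's API.

```python
from dataclasses import dataclass


@dataclass
class DeveloperActivity:
    """Hypothetical per-developer activity summary for one period."""
    tasks_total: int           # tasks completed (code, tests, documentation)
    tasks_ai_assisted: int     # tasks where an AI assistant was used
    suggestions_shown: int     # AI suggestions displayed to the developer
    suggestions_accepted: int  # AI suggestions the developer kept
    working_days: int          # days with any development activity
    ai_active_days: int        # days on which the AI assistant was used


def ratio(part: int, whole: int) -> float:
    """Safe ratio: returns 0.0 when there is nothing to divide by."""
    return part / whole if whole else 0.0


def adoption_dimensions(team: list[DeveloperActivity]) -> dict[str, float]:
    """Aggregate the three adoption dimensions at team level."""
    return {
        # Reliance: share of tasks assisted by AI
        "reliance": ratio(sum(d.tasks_ai_assisted for d in team),
                          sum(d.tasks_total for d in team)),
        # Trust: acceptance rate of generated suggestions
        "trust": ratio(sum(d.suggestions_accepted for d in team),
                       sum(d.suggestions_shown for d in team)),
        # Reflexive use: share of working days on which AI was used at all
        "reflexive_use": ratio(sum(d.ai_active_days for d in team),
                               sum(d.working_days for d in team)),
    }


# Invented example data for a two-person team
team = [
    DeveloperActivity(20, 12, 400, 120, 18, 16),
    DeveloperActivity(15, 3, 100, 10, 20, 4),
]
print(adoption_dimensions(team))
```

Summing raw counts before dividing weights each developer by their volume of activity; averaging per-developer ratios instead would give every developer equal weight. Either choice is defensible, as long as you apply it consistently over time.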

It's this combination that determines whether the tool has become part of the team's DNA or just an expensive gadget.

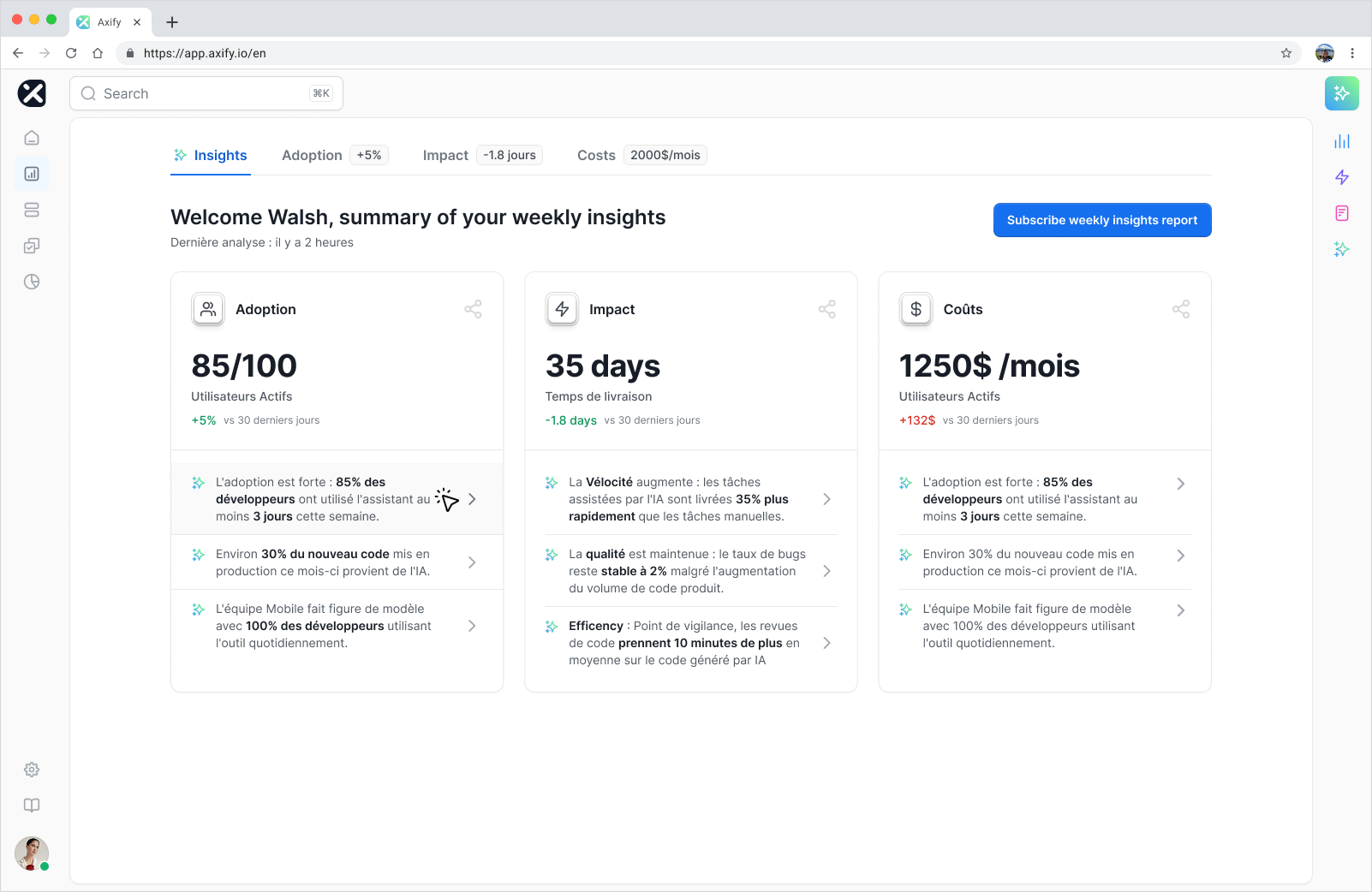

At Nexapp, we've developed Axify, a delivery intelligence solution that gives IT managers complete visibility into their teams' performance, helping them make informed decisions. Instead of relying on informal impressions or surveys, Axify connects directly to your tools' APIs (such as GitHub Copilot) to centralize data across all three dimensions of AI adoption in software development.

By centralizing these signals, the platform enables you to visualize adoption in real time. You get a clear picture of who's using AI and how it fits into your current processes. This is the indispensable basis for subsequently analyzing the real impact on your performance.

Step #2: Correlate adoption with delivery performance

Organizations are multiplying AI initiatives. New tools are appearing every week, and no one wants to "miss the train". But beware: enthusiasm often outweighs real understanding of impact. The question on every engineering leader's lips is: Are we really tapping the full potential of AI?

Many managers fall for "vanity metrics". It's tempting to celebrate 30% more code merged this month, but is it good code? Has it created more bugs in production? To determine whether AI is propelling or hindering your workflow, look at three pillars: speed, quality, and developer experience.

Measuring AI's impact on delivery speed

Are you really delivering more value, faster, thanks to AI? The classic mistake is to measure the impact of AI by the number of lines of code produced. If more code automatically meant more value, all you'd have to do is hire more hands to code, but we all know it doesn't work like that.

According to Google's State of DevOps 2024 report, high-performance teams deliver 127 times faster than others. And that's not because they type faster, but because they optimize their entire workflow. If coding faster simply fills your code review backlog or creates downstream bottlenecks, you've gained nothing.

That's why, at Nexapp, we prefer delivery time as our measure of speed: a universal indicator of the time it takes to go from idea to production. Because it encompasses the entire value chain, it reveals whether "coding faster" translates into real benefit for your users.
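
As an illustration, delivery time can be computed from two timestamps per work item: when the idea was logged and when it reached production. In this minimal Python sketch, the `WorkItem` record, its fields and the sample data are hypothetical; in practice these timestamps would come from your issue tracker and deployment pipeline.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class WorkItem:
    """Hypothetical work item: when the idea was logged, when it shipped."""
    key: str
    created_at: datetime
    deployed_at: Optional[datetime]  # None while not yet in production


def delivery_days(items: list[WorkItem]) -> list[float]:
    """Delivery time, in days, for every item that has reached production."""
    return [
        (item.deployed_at - item.created_at).total_seconds() / 86_400
        for item in items
        if item.deployed_at is not None
    ]


items = [
    WorkItem("APP-1", datetime(2025, 3, 1), datetime(2025, 3, 11)),
    WorkItem("APP-2", datetime(2025, 3, 3), datetime(2025, 3, 7)),
    WorkItem("APP-3", datetime(2025, 3, 5), datetime(2025, 3, 26)),
    WorkItem("APP-4", datetime(2025, 3, 8), None),  # still in progress
]

print(f"median delivery time: {median(delivery_days(items)):.1f} days")
```

Reporting the median rather than the mean keeps a single long-running item from distorting the picture, and items not yet in production are excluded rather than counted as zero.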

Measuring the impact of AI on code quality

We all want to deliver faster, but at what cost? Producing code at lightning speed is pointless if it adds to your technical debt. AI can generate code that seems to work, but lacks architectural vision and robustness.

To assess whether AI is pulling your quality up or down, the DORA metrics measured in real time in Axify remain your best benchmark:

- Rework rate: Is AI-generated or AI-assisted code rejected or corrected more often during testing or code review?

- Change failure rate: Is the stability of your application decreasing? An increase in this rate is often a sign that the AI has introduced regressions that your tests missed.
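
Both indicators reduce to simple ratios once each change is tagged. The Python sketch below compares AI-assisted changes with the rest. It assumes a hypothetical `Change` record in which AI assistance, rework and production failures are already tracked, and, as a simplification, treats each deployed change as its own deployment (DORA defines change failure rate per deployment).

```python
from dataclasses import dataclass


@dataclass
class Change:
    """Hypothetical merged change (pull request) and what happened to it."""
    ai_assisted: bool     # flagged as AI-assisted (assumed to be tracked)
    reworked: bool        # sent back or corrected during review or testing
    deployed: bool        # reached production
    caused_failure: bool  # its deployment needed a hotfix, rollback or patch


def rate(numerator: int, denominator: int) -> float:
    """Safe ratio: returns 0.0 for an empty cohort."""
    return numerator / denominator if denominator else 0.0


def quality_metrics(changes: list[Change], ai_assisted: bool) -> dict[str, float]:
    """Rework rate and change failure rate for one cohort of changes."""
    cohort = [c for c in changes if c.ai_assisted == ai_assisted]
    deployed = [c for c in cohort if c.deployed]
    return {
        "rework_rate": rate(sum(c.reworked for c in cohort), len(cohort)),
        "change_failure_rate": rate(
            sum(c.caused_failure for c in deployed), len(deployed)
        ),
    }


# Invented sample data
changes = [
    Change(True, True, True, False),
    Change(True, False, True, True),
    Change(True, False, True, False),
    Change(True, True, False, False),
    Change(False, False, True, False),
    Change(False, True, True, False),
    Change(False, False, True, False),
    Change(False, False, True, True),
]

for label, flag in (("AI-assisted", True), ("other", False)):
    print(label, quality_metrics(changes, flag))
```

Splitting the data into cohorts is what turns these metrics into an answer: if the AI-assisted cohort consistently shows higher rework or failure rates, speed is being bought at the expense of quality.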

Measuring the impact of AI on the developer experience (DevEx)

Finally, AI must not become a source of stress. Successful adoption is reflected in your troops' morale. AI should remove friction, not impose an insurmountable cognitive load on code reviews.

Ask yourself (and them) these questions:

- Do your developers feel more productive, or overwhelmed by the flow of suggestions?

- Does AI free up time for strategic thinking, or does it force senior staff to spend their day correcting code generated by juniors?

Developer engagement is the key to retention. If AI improves their daily lives, the impact on the organization's overall performance will be tenfold.

How can you maximize the adoption and impact of AI in your development teams?

Organizations that win the AI game see it as a lever for delivering more value, sooner. Losers see it simply as a means of reducing short-term labour costs. To be in the first group, adoption needs to be structured. Here are our tips, from the field, for turning your AI initiatives into measurable business successes.

The 7 winning conditions for successful AI initiatives

To turn AI into a competitive advantage and maximize your initiatives' chances of success, build on a solid foundation.

- Clear corporate position: Define authorized tools and expectations. AI must be used to deliver value, not just to reduce costs.

- Reliable data ecosystem: AI performs best when it has access to your internal context (e.g., documentation and decision history).

- Rigorous version management: As code volume increases, rollback capability and branch stability are non-negotiable.

- Development in small increments: Work in small batches to make human review of AI suggestions easier.

- User-centered approach: AI must solve a problem for the customer, not just generate code.

- High-quality internal platforms: Provide safeguards, such as automated testing, to ensure AI-assisted deployments are safe.

- VSM (Value Stream Management): VSM ensures that AI's productivity gains are not absorbed by operational or technical bottlenecks.

Tip: With VSM, end-to-end delivery teams spend more time on what really creates value, improve product performance and direct AI gains toward systemic problems, rather than producing isolated gains that bottlenecks absorb. Without VSM, AI can create local efficiencies that bring no real value to the organization.
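
The reasoning behind VSM can be illustrated with a deliberately simplified model of a serial value stream, in which finished work can only flow out as fast as the slowest stage allows. The stage names and weekly capacities below are invented for illustration, and the model ignores queues, rework and variability.

```python
def end_to_end_throughput(stage_capacity: dict[str, float]) -> tuple[str, float]:
    """Return the bottleneck stage and the flow it allows (items per week).

    In a simple serial pipeline, work can only leave as fast as the
    slowest stage processes it.
    """
    bottleneck = min(stage_capacity, key=stage_capacity.get)
    return bottleneck, stage_capacity[bottleneck]


# Hypothetical weekly capacities for one team's value stream
before = {"coding": 10, "code review": 8, "testing": 9, "deployment": 12}

# AI doubles coding capacity, but nothing else changes
after_ai = {**before, "coding": before["coding"] * 2}

# Review capacity is also improved (e.g., smaller batches, better tooling)
after_review = {**after_ai, "code review": 16}

print(end_to_end_throughput(before))        # ('code review', 8)
print(end_to_end_throughput(after_ai))      # ('code review', 8)
print(end_to_end_throughput(after_review))  # ('testing', 9)
```

Doubling coding capacity leaves end-to-end throughput unchanged because code review remains the constraint; only when review capacity improves does the bottleneck move, here to testing. That is the difference between a local efficiency and a systemic gain.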

Deployment strategy: start with model teams

Don't try to transform the whole organization at once. As AI is an amplifier, it will work best where processes are already in place. The aim is to create replicable success stories. Choose teams:

- Stable and technically mature.

- Supported by strong leadership.

- Motivated by continuous improvement.

Our Axify tool evaluates your teams according to 4 performance pillars. This enables you to identify at a glance the best-performing teams, ready to become your AI "pilots".

Remember: A fragile team, amplified by AI, becomes even more fragile. A strong team, amplified by AI, becomes an army of productivity.

Redefining the role of the modern software developer

AI doesn't replace the developer, but it does change the developer's role. Freed from some of the repetitive work, the modern software engineer can devote more energy to what creates the most value: designing solid architectures, critically reviewing generated output, and solving complex problems through technology. We recommend the following:

- Modernize code review: Code review used to focus largely on catching syntax errors or typos. With AI, this thankless task disappears. The human reviewer must now act as a technical curator, ensuring that the generated code respects the project's overall architecture (which the AI doesn't always see), remains maintainable by other humans, and doesn't solve a local problem by creating another one elsewhere.

- Reinforce pair programming: The most successful teams use AI to explore solutions rapidly, but they institute strict validation protocols to counteract "technical hallucinations" (when the AI invents a library or method that doesn't exist). Human pair programming is the best way to keep a critical eye on the AI's sometimes overly convincing suggestions.

- Never accept without understanding: An AI suggestion should never be merged if the developer cannot explain precisely the "why" of each line. The more code the AI generates, the sharper the human eye needs to be to keep the codebase coherent and maintainable. If developers don't understand the code they deliver, they won't be able to debug it later. The longevity of your product depends on this rigour: humans remain solely responsible for the technical debt they agree to merge.
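
Part of a validation protocol against hallucinated libraries can be automated. As one possible safeguard (our own illustration, not a practice described in the DORA report), this Python sketch parses a suggested snippet and lists the imported top-level modules that cannot be found in the current environment. The `unresolvable_imports` helper is hypothetical; it does not detect invented functions or methods, and a module that does resolve could still be the wrong package, so it complements human review rather than replacing it.

```python
import ast
import importlib.util


def unresolvable_imports(source: str) -> list[str]:
    """List top-level modules imported by `source` that cannot be found
    in the current Python environment (a possible sign of a hallucinated
    dependency, or simply a missing install)."""
    modules: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            # Relative imports ("from . import x") refer to local code: skipped.
            modules.add(node.module.split(".")[0])
    # find_spec returns None when no installed module matches the name
    return sorted(m for m in modules if importlib.util.find_spec(m) is None)


suggestion = """
import json
from collections import OrderedDict
import totally_real_fastjson_utils
"""
print(unresolvable_imports(suggestion))  # ['totally_real_fastjson_utils']
```

A check like this can run in CI on every pull request, so that obviously nonexistent dependencies are flagged before a human reviewer spends time on the change.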

As a leader, championing these practices can turn AI into a real lever for quality.

Set measurable (and ambitious) objectives

Don't aim for "more AI". Aim for clear business results. Use DORA metrics or overall delivery time as starting points. These are the indicators that will tell you whether your AI investment is really transforming your ability to innovate.

Why AI adoption needs a strategic ally

Moving from sporadic AI use to a sustainable productivity driver requires more than just a software subscription. At Nexapp, we believe AI isn't simply implemented; it has to be tamed. That's why we work on two essential fronts to secure your investment:

Infusing best practices through co-development

Adopting AI is first and foremost a cultural change. By working in co-development with our experts, your teams don't just watch tutorials: they learn in the heat of the action.

Our experts integrate directly with your teams to:

- Pass on the right reflexes: Learn to "prompt" with context, validate suggestions and avoid common pitfalls.

- Establish safeguards: Set up code review processes and quality standards to maintain healthy code, even as code volume increases.

- Guarantee sustainability: Ensure the speed you gain today doesn't become a millstone around your neck tomorrow.

Monitor results in real time with Axify

You can't improve what you don't measure. We use Axify to understand the real impact of AI on the software development cycle, and to give managers full visibility of the transformation underway.

Axify enables you to:

- Monitor the code base's health: Is stability maintained despite acceleration?

- Measure real velocity: Ensure productivity gains reach the end user and don't get stuck in your review queues.

- Fine-tune: Quickly identify which teams have mastered the tool and which need additional support.

- Make better decisions, faster: Axify's AI agents analyze data from your tools, detect important signals and suggest next steps.

Combining human guidance with data accuracy turns AI adoption into a strategic growth lever for your business.

Conclusion: AI at the service of your performance

AI is a race, but it's a marathon, not a sprint. The goal is not to be the first to integrate AI throughout the development cycle, but to strategically target the points where AI can deliver real productivity gains. And it can really pay off! On a team of 40 developers, reducing time-to-market from 6 to 3 months can save $4 million and effectively double the team's capacity in full-time equivalent (FTE) developers.

AI won't replace your developers tomorrow morning. However, a team that knows how to use it intelligently will leave the competition far behind. Teams that master AI will replace those that don't.

The important thing is to stay in control. Don't let AI create chaos or increase your technical debt. At Nexapp, we help you avoid the pitfalls of hasty adoption and turn AI into a real performance lever within your development teams. And thanks to our Axify tool, we can help you measure the real impact of your initiatives and optimize your development cycle.

Want to know if AI is really creating value in your teams?

Try Axify and finally measure the real impact of AI on your delivery performance.

Want to integrate AI with confidence and impact into your development teams?

Contact our experts for context-specific advice on AI adoption.

